Cheaper Information Doesn't Mean Cheaper Decisions

The deflationary force from AI is real. Who captures it is a different question.

In the early 2000s, a portfolio manager started most mornings with a note from a sell-side bank. An analyst had spent several days reading earnings transcripts, stress-testing model assumptions, and calling investor-relations departments. The resulting note changed how the manager positioned holdings before the open.

The note arrived through a soft-dollar arrangement (the fund routed commission dollars to the bank in exchange for research access). The cost was real and ongoing. The manager wasn’t paying for the data underlying the note. They could access the same public filings the analyst read. They were paying for the work of turning data into something they could act on.

That work (synthesizing, interpreting, ranking what matters, arriving at a conclusion) is what made the note worth paying for. It required a trained person, significant time, and judgment built up over years of doing it wrong and then correcting themselves.

A good LLM query today can produce something that looks a lot like that morning note. Not identical. Not as good on every dimension. But comparable enough that “worth $40,000 a year” is no longer the obvious conclusion.

This is what AI is compressing. Not the data layer. Not the decision layer. The analyst step in between.

Four Links Between Data and an Outcome

Think of the path from raw signal to financial outcome as a chain with four links.

Data - raw measurements, transactions, prices, signals. Data in isolation is economically inert. A million car-speed readings on a highway are not traffic information. The average speed, broken down by time of day, is.

Information - data processed into something that can change a belief or support a judgment. The analyst’s note. The actuarial table. The legal brief. The credit risk score. This is where a large share of knowledge work actually lives, and where AI is producing its most direct cost reduction.

Decision - the judgment call that puts information into action. A portfolio manager adding to a position. A CFO approving capital expenditure. A doctor ordering a test. Decisions require context, accountability, and tolerance for uncertainty that information alone does not resolve. They are distinct from information.

Outcome - revenue gained, cost avoided, loss prevented. How decisions register on a balance sheet or income statement.

Much of the fear about “AI taking jobs” is really a concern about the second link. AI is not yet replacing the data-generating infrastructure, and in most high-stakes organizational contexts it is not the accountable decision-maker. It is compressing the cost of turning data into information (the analyst step), which was historically the most labor-intensive link in the chain.
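The highway example from the data link can be made concrete. The sketch below is illustrative only: the readings are invented, and `average_speed_by_hour` is a hypothetical helper name, not part of any real traffic system. It shows how a pile of raw readings becomes something a commuter could act on.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical raw readings: (hour of day, speed in mph). Values are invented.
readings = [
    (8, 22.0), (8, 18.0), (8, 20.0),
    (13, 61.0), (13, 59.0),
    (17, 25.0), (17, 15.0),
]

def average_speed_by_hour(rows):
    """Group raw speed readings by hour and return the mean speed per hour."""
    by_hour = defaultdict(list)
    for hour, speed in rows:
        by_hour[hour].append(speed)
    return {hour: mean(speeds) for hour, speeds in sorted(by_hour.items())}

info = average_speed_by_hour(readings)
print(info)  # {8: 20.0, 13: 60.0, 17: 20.0}
```

The individual readings say little on their own. The grouped averages support a judgment (avoid the road at 8 and 17), which is the step the analyst used to be paid for.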

The Deflationary Logic

If information is an input to almost every business decision, and the cost of that input falls substantially, then the cost of operating businesses should decline. This is broadly deflationary, at least in industries where information production represents a meaningful share of operating costs.

The historical parallel that fits best is the cost collapse in agricultural production. Farming employed roughly 40% of the American workforce in the early 20th century and about 2% by the early 2000s, with similarly low figures since.[1] That transition was not a catastrophe. It was a reallocation. Labor freed from food production moved into jobs that didn’t exist when the transition began.

A version of this played out in the private-market research industry within the past two decades. When I was at PitchBook, the product sold was aggregation and structure: thousands of individual deal disclosures collected, standardized, and made comparable in a single database. The company’s VC Dealmaking Indicator, for example, was built on top of that. It surfaced deal-flow trends that required the full database to exist and would have been impractical to construct without it. The synthesis was what investors paid for. AI is now moving down the same curve, toward layers of interpretation and analysis that weren’t reached before.

The mechanism is similar for information. When routine synthesis gets cheaper, more decisions become economically viable, because the cost of gathering enough information to support each decision falls. That expansion of decision-making at the margin is likely to create demand for different work, not eliminate work in aggregate.
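A toy model makes the margin argument easier to see. It assumes, purely for illustration, that a decision is worth making only when its expected payoff exceeds the cost of getting informed enough to make it. The payoffs, the two cost levels, and the `viable_decisions` helper are all hypothetical.

```python
# Hypothetical expected payoffs (in dollars) for five candidate decisions.
expected_payoffs = [500, 2_000, 8_000, 15_000, 60_000]

def viable_decisions(payoffs, info_cost):
    """Return the payoffs of decisions whose expected payoff exceeds the
    cost of gathering enough information to support them (an assumed rule)."""
    return [p for p in payoffs if p > info_cost]

# Assumed information cost per decision, before and after synthesis gets cheaper.
before = viable_decisions(expected_payoffs, info_cost=10_000)
after = viable_decisions(expected_payoffs, info_cost=1_000)

print(len(before), len(after))  # 2 4
```

Under these assumptions, the two largest decisions were always worth informing. The cheaper input pulls two smaller ones above the threshold, and those are the decisions that create demand for new work.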

The evidence so far is consistent with that. Business software spending rose 11% in the fourth quarter of 2025, the fastest pace in nearly three years, as AI was simultaneously lowering what each unit of software output costs to produce.[2] Cheaper per-unit cost drove more demand, not less total spending. James Bessen at Boston University finds the same pattern across prior technology waves, from textile manufacturing in the 19th century to ATMs in the 1980s.

But the deflationary pressure does not distribute evenly. In competitive markets, cost savings eventually reach consumers through lower prices. In concentrated markets, they accumulate as margin first. The question of who captures the surplus from cheaper information is entirely separate from the question of whether cheaper information is deflationary.

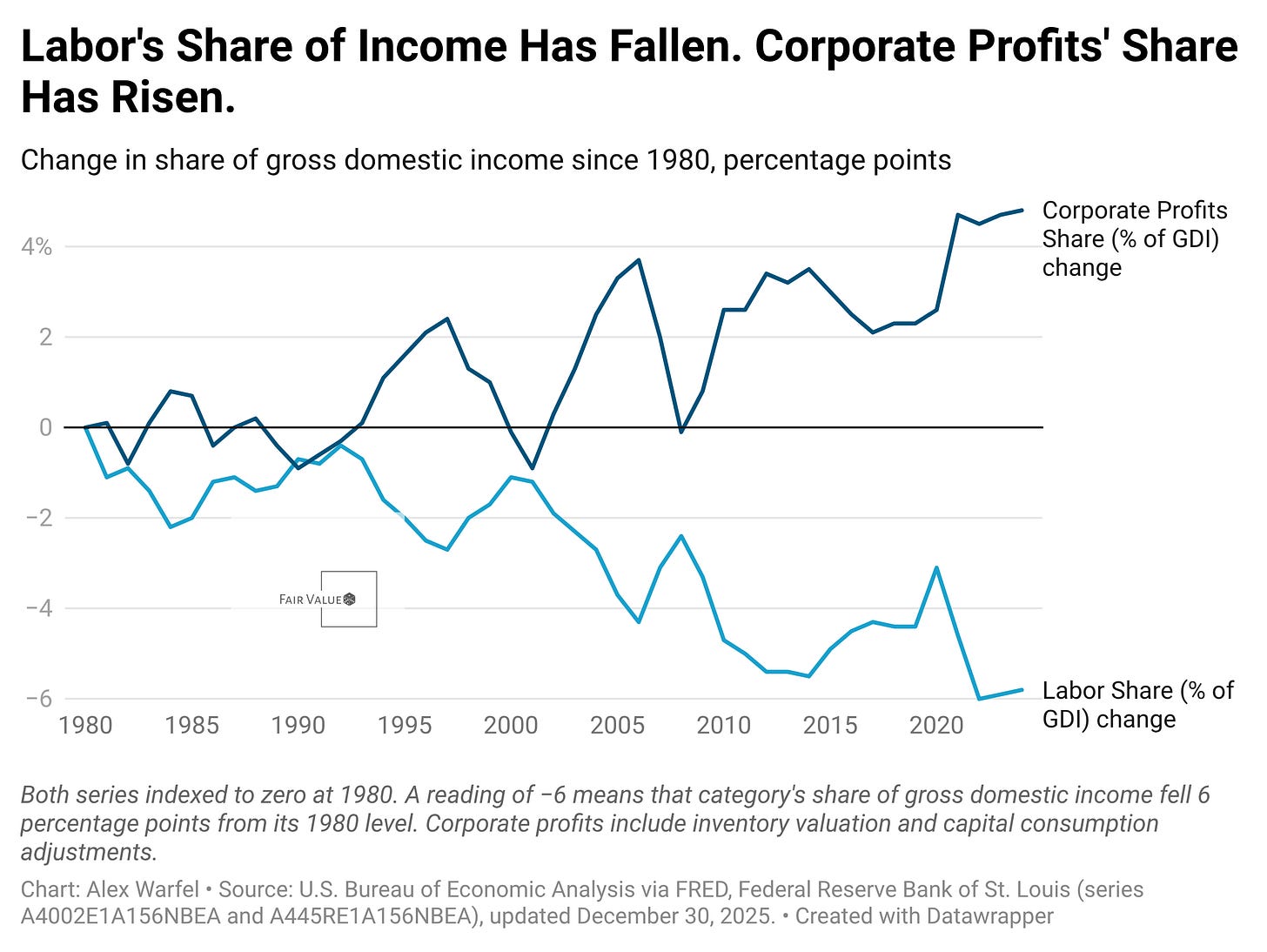

Labor’s share of gross domestic income (the total income earned across the economy) fell from about 57.7% in 1980 to roughly 51.8–51.9% by 2023–2024, while corporate profits’ share rose from about 6.7% to 11.5% over the same period.[3][4] AI adds another potential mechanism running in the same direction. The surplus goes to capital first, then maybe to consumers, and finally to workers. That last step depends on bargaining power more than on technology.
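The size of the shift is easier to compare in percentage points. The short calculation below uses only the figures quoted above; the variable names and the `point_change` helper are illustrative.

```python
# Shares of gross domestic income, in percent, as quoted in the text.
labor_1980 = 57.7
labor_recent_low, labor_recent_high = 51.8, 51.9  # 2023-2024 range
profits_1980, profits_recent = 6.7, 11.5

def point_change(start, end):
    """Change in percentage points, rounded to one decimal place."""
    return round(end - start, 1)

labor_change = (
    point_change(labor_1980, labor_recent_low),
    point_change(labor_1980, labor_recent_high),
)
profit_change = point_change(profits_1980, profits_recent)

print(labor_change)   # (-5.9, -5.8)
print(profit_change)  # 4.8
```

On these figures, labor's share fell by a little under six points while the profit share rose by a little under five. The two series are not each other's mirror image, so the calculation shows direction and scale rather than a one-to-one transfer.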

What Happens to Skill Premiums

The intuitive concern is that cheaper information means fewer analysts, which means lower wages for knowledge workers broadly. This isn’t obviously wrong, but it probably puts the focus on the wrong thing.

The more useful question is what happens to the complexity of the decisions that information supports. Historically, technology adoption has tended to raise skill premiums rather than compress them, because complexity compounds as tools improve.

The college-to-high-school earnings gap in the United States doubled between 1981 and 2005, from 48% to 97%.[5] This period coincided with rapid technology adoption across the economy. Technology made some tasks cheaper but raised the cognitive bar for the tasks that remained valuable.

Spreadsheet software offers the clearest precedent at the knowledge-work level. When Lotus 1-2-3 and Excel arrived in the 1980s, bookkeeping jobs shrank. Accountant and financial analyst jobs (the roles that used the same tools to ask harder questions) grew even more.[2] The tool commoditized the mechanical work. Demand for judgment about what the numbers meant rose to fill the space. In 2024, young computer-science graduates earned 63% more than the typical young graduate, up from 47% in 2009.[2] The premium for knowledge work hasn’t compressed during the AI era. It has continued to widen.

The Black Death offers a useful example showing how this works in reverse. When roughly a third of Europe’s population died in the mid-14th century, raw labor became scarce and wages for common workers rose sharply. Unskilled wages rose faster than skilled wages in many documented settings, compressing skill premiums. Not because technology changed, but because the quantity of labor fell suddenly.[6] That’s a different mechanism. AI is not reducing the quantity of people available to do knowledge work. It is making a specific kind of knowledge work cheaper to replicate. If AI functions as a substitute for routine synthesis and a complement to complex judgment, the premium on judgment rises.

The caveat is that “complex judgment” is not a stable category. Tasks requiring genuine judgment in 2022 may look like routine synthesis by 2028. The floor keeps rising as the tools improve. That is uncomfortable for workers planning careers, but it is distinct from the claim that skill premiums collapse. Historical evidence from prior technology cycles runs the other way.

Which Link in the Chain Describes Your Work

For individuals, the practical question is which link in the chain describes your actual work.

If your primary function is at the data layer (collecting, cleaning, and organizing raw information), the pressure is real and has been building for years before AI accelerated it.

If you are primarily at the information layer, the picture depends on what kind of information you produce. Consider two analysts at the same firm. The first spends most of their time building formatted data tables and writing standard-structure reports. The second spends most of their time deciding which questions are worth asking and pressure-testing where the model assumptions are fragile. AI compresses the first analyst’s workload significantly. It gives the second analyst better raw material to work with. The most valuable work was always the judgment embedded in the analysis, not the formatting. AI is making that distinction visible.

If you are at the decision layer (where novel situations, irreversibility, and accountability are involved), AI is more likely to be a tool than a substitute, at least for now.

The Question Investors Should Be Asking

For investors, the surplus capture question matters more than the technology question.

When a key input gets cheaper, the value that used to flow to its producers has to go somewhere. In competitive industries, some of that value eventually reaches clients through lower prices. In concentrated industries, it stays as margin. The early pattern is consistent with this: firms appear to be capturing AI efficiency gains in profit guidance faster than those gains show up in the prices they charge, though a full accounting will take years of data.

The investor question is not whether AI will lower the cost of information production. It probably will. The investor question is which companies sit above that cost compression and capture the margin, and which sit below it and face competitive pressure from buyers who now have better analytical tools of their own.

Incumbents with pricing power and deep customer relationships are better positioned to capture the surplus. Smaller operators in more competitive markets (where buyers can now do more of their own analysis) face more price pressure from the same technology.

The Thing Most Fear Narratives Skip

The anxiety about AI and work usually presents as a quantity problem. How many jobs will remain? The harder and more important version is a price problem. What will knowledge work pay, and who captures the surplus when information gets cheaper?

Being informed enough to make a sound business decision has always been expensive. Getting informed is getting cheaper. Those are not the same thing, and they do not carry the same implications.

The layoffs in knowledge-work industries over the past few years also deserve scrutiny before being attributed to AI. In Challenger, Gray & Christmas tracking, AI-attributed cuts have been a small fraction of total announced layoffs.[7] A 2025 working paper testing the “ChatGPT shock” finds no statistically significant increase in tech layoffs attributable to it.[8] Since the Fed began rapid rate hikes in 2022, tighter financial conditions and a post-pandemic hiring correction have coincided with layoffs across tech and other knowledge-work-heavy firms. Rates, constrained venture capital, and pandemic-era overhiring are all well-evidenced contributors. AI is frequently cited in layoff announcements, but cited reasons and causal attribution are different things.

Cheaper information is real. The reallocation that follows is real. But the shape of that reallocation (who bears the adjustment cost, who captures the surplus) is not determined by the technology. It is determined by market structure, bargaining power, and how quickly complexity rises to meet new tools.

That’s the part most of the fear narrative skips.

This post is general education only and not financial advice. Past economic patterns do not guarantee future outcomes.

Footnotes

[1] Carolyn Dimitri, Anne Effland, and Neilson Conklin, “The 20th Century Transformation of U.S. Agriculture and Farm Policy (EIB-3),” USDA Economic Research Service, June 2005. Available at https://www.ers.usda.gov/sites/default/files/laserfiche/publications/44197/13566_eib3_1.pdf. The report summarizes agricultural employment share at roughly 41% in 1900 and approximately 1.9% by 2000.

[2] Greg Ip, “Tech Has Never Caused a Job Apocalypse. Don’t Bet on It Now.,” Wall Street Journal, February 27, 2026. Available at https://www.wsj.com/economy/jobs/tech-has-never-caused-a-job-apocalypse-dont-bet-on-it-now-d192b579?mod=hp_lead_pos11. Sources cited within include: bookkeeper/accountant employment analysis and software demand data from James Bessen (Technology and Policy Research Initiative at Boston University); computer-science graduate earnings premium from Connor O’Brien (Institute for Progress).

[3] Wall Street Journal, “The Big Money in Today’s Economy Is Going to Capital, Not Labor,” February 9, 2026. Available at https://wsj.com/economy/jobs/capital-labor-wealth-economy-2fcf6c2f

[4] U.S. Bureau of Economic Analysis, “Shares of Gross Domestic Income: Compensation of Employees (A4002E1A156NBEA)” and “Shares of Gross Domestic Income: Corporate Profits with Inventory Valuation and Capital Consumption Adjustments (A445RE1A156NBEA),” FRED, Federal Reserve Bank of St. Louis, updated December 30, 2025. Available at https://fred.stlouisfed.org/series/A4002E1A156NBEA and https://fred.stlouisfed.org/series/A445RE1A156NBEA

[5] David H. Autor, “Skills, Education, and the Rise of Earnings Inequality among the ‘Other 99 Percent,’” Science 344, no. 6186 (May 23, 2014). Available at https://economics.mit.edu/sites/default/files/publications/skills education earnings 2014.pdf. The 48% to 97% figure refers to the weekly earnings premium for college graduates relative to high school graduates. Also cited in Walter Scheidel, The Great Leveler, Princeton University Press, 2017, Kindle page 412.

[6] Remi Jedwab, Noel D. Johnson, and Mark Koyama, “The Economic Impact of the Black Death,” George Washington University / IIEP Working Paper (2020). Available at https://www2.gwu.edu/~iiep/assets/docs/papers/2020WP/JedwabIIEP2020-14.pdf. The paper documents examples (including Florence 1348–1350) where unskilled real wages rose faster than skilled wages following the plague, implying skill-premium compression in those settings. Evidence varies by region and skill category.

[7] Challenger, Gray & Christmas, “Challenger Report: December 2025,” January 8, 2026. Available at https://www.challengergray.com/wp-content/uploads/2026/01/Challenger-Report-December-2025.pdf. Challenger tracks self-reported reasons for announced layoffs; AI-attributed cuts have represented a small fraction of total announced cuts in recent reporting periods.

[8] Manhal Ali, “Narratives vs. Numbers: A Textual and Quasi-Experimental Analysis of AI’s Early Impact on Tech Layoffs,” SSRN, 2025. Available at https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5987016. The paper finds no statistically significant increase in tech layoffs attributable to the ChatGPT shock, while documenting that AI has become a prominent rhetorical justification in layoff communications.
